
You can programmatically interact with AWS using one of their SDKs, which are available in many different programming languages, including JavaScript, Python, Node.js, and Ruby. Through an SDK, you can run SQL queries, store the resulting data in a variable within your code, and then save that data as a CSV file. Even if you're going to use another language, the Python example should be clear enough to give you an idea of how to approach this.

The AWS SDK for Python is called boto3, which you'll have to install.

```python
pip install boto3
```

Once installed, import the library and declare a client.

```python
import boto3

# Client for the Redshift Data API; replace the placeholder credentials.
client = boto3.client(
    'redshift-data',
    region_name='us-east-2',
    aws_access_key_id='your-public-key',
    aws_secret_access_key='your-secret-key'
)
```

For region_name, you'll generally want to use the region in which your resources are already located, which you'll find at the beginning of the URL when logged into the Redshift console.

You're now ready to execute a query, which will gather your data.

```python
response = client.execute_statement(
    ClusterIdentifier='your-cluster',
    Database='your-database',
    DbUser='your-user',
    Sql='SELECT * FROM users'  # Insert your SQL query here
)
```

The response is a dictionary holding information about the request you just made. One of the keys is Id, which is the universally unique ID of your query.

```python
query_id = response['Id']
```

Insert this query ID into describe_statement to see the current status of your query. There is no use in trying to access your data if the key Status doesn't yet equal FINISHED.

```python
print(client.describe_statement(Id=query_id))
```

When the status indicates that your query is done, retrieve the data with get_statement_result and save it to a variable.
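As a rough sketch of the last steps of the SDK approach (waiting for the query to finish, then turning the result into CSV), something like the following could work. The helper names wait_for_statement, field_value, and result_to_csv are made up for this illustration and are not part of boto3. The sample dictionary only mimics the shape of a get_statement_result response (ColumnMetadata and Records); it is not real query output.

```python
import csv
import io
import time


def wait_for_statement(client, statement_id, poll_seconds=1.0):
    """Poll describe_statement until the query reaches a final status."""
    while True:
        description = client.describe_statement(Id=statement_id)
        if description["Status"] in ("FINISHED", "FAILED", "ABORTED"):
            return description
        time.sleep(poll_seconds)


def field_value(field):
    """Return the plain value held in one field of a result record."""
    if field.get("isNull"):
        return ""
    # Each field is a single-key dict such as {"stringValue": "abc"}.
    return next(iter(field.values()))


def result_to_csv(result):
    """Convert a get_statement_result-style dict into CSV text with a header."""
    buffer = io.StringIO()
    writer = csv.writer(buffer, lineterminator="\n")
    writer.writerow([column["name"] for column in result["ColumnMetadata"]])
    for record in result["Records"]:
        writer.writerow([field_value(field) for field in record])
    return buffer.getvalue()


# Hypothetical sample data in the shape the Data API returns.
sample_result = {
    "ColumnMetadata": [{"name": "id"}, {"name": "name"}],
    "Records": [
        [{"longValue": 1}, {"stringValue": "Ada"}],
        [{"longValue": 2}, {"isNull": True}],
    ],
}

csv_text = result_to_csv(sample_result)
print(csv_text)
```

With a real client, you would call wait_for_statement(client, query_id), check that the returned Status is FINISHED, pass the output of client.get_statement_result(Id=query_id) to result_to_csv, and write the text to a file. Large results are paginated through NextToken, which this sketch ignores.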
The basic syntax to export your data is as below.

```sql
UNLOAD ('SELECT * FROM your_table')
TO 's3://object-path/name-prefix'
IAM_ROLE 'arn:aws:iam:::role/'
CSV
```

On the first line, you query the data you want to export. Be aware that Redshift only allows a LIMIT clause in an inner SELECT statement. The second line contains the TO clause, where you define the target S3 bucket path. You will need write permission on that bucket to be able to execute the query. The third line is your authorization and is one of several ways in which you can authorize. If you decide to use the method above, you can find your 12-digit account ID in the support center, by clicking on your account name in the navigation bar. Finally, the fourth line tells Redshift that you want your data to be saved as CSV, which is not the default.

There are numerous other options that you can add to the above query to customize it to fit your needs. A few that can be especially useful are:

HEADER: This adds a row with column names at the top of your output file(s). You'll want to do this in pretty much every scenario.

DELIMITER: The default character for CSV files is a comma. If your data contains commas, it may lead to unexpected results. In such a case you might use, for instance, a pipe ( | ) instead.

ADDQUOTES: This will add quotes around every field in your data, which is another way of making sure that commas in your data don't lead to unexpected results.

GZIP, BZIP2, or ZSTD: Adding one of these compression options will significantly reduce your file size and hence make it quicker to download or send elsewhere.

You can find all options in the AWS Database Developer guide.

Once you've saved your data to your S3 bucket, the easiest way to download it to your local machine is to navigate to your bucket and file in the AWS console, from where you can download it directly.
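To illustrate how the UNLOAD pieces and a few of the optional settings fit together, here is a minimal sketch of a helper that assembles the statement as a string. The function build_unload_sql, the bucket path, and the role ARN are all hypothetical placeholders invented for this example. The sketch assumes single quotes inside the inner query are escaped by doubling them, and it leaves out options beyond a header row, a delimiter, and compression.

```python
def build_unload_sql(select_sql, s3_prefix, iam_role_arn,
                     header=True, delimiter=None, compression=None):
    """Assemble an UNLOAD statement that writes CSV files to S3."""
    # The inner query is passed as a quoted string, so any single
    # quotes it contains are doubled.
    escaped_query = select_sql.replace("'", "''")
    lines = [
        f"UNLOAD ('{escaped_query}')",
        f"TO '{s3_prefix}'",
        f"IAM_ROLE '{iam_role_arn}'",
        "CSV",
    ]
    if delimiter is not None:
        # Replace the plain CSV line with one that overrides the comma.
        lines[-1] = f"CSV DELIMITER AS '{delimiter}'"
    if header:
        lines.append("HEADER")
    if compression is not None:
        option = compression.upper()
        if option not in {"GZIP", "BZIP2", "ZSTD"}:
            raise ValueError(f"Unsupported compression option: {compression}")
        lines.append(option)
    return "\n".join(lines)


# Placeholder values only; substitute your own bucket and role.
unload_sql = build_unload_sql(
    "SELECT * FROM users WHERE country = 'NL'",
    "s3://example-bucket/exports/users_",
    "arn:aws:iam::123456789012:role/ExampleUnloadRole",
    delimiter="|",
    compression="gzip",
)
print(unload_sql)
```

The resulting string can be pasted into the query editor or passed as the Sql argument of an SDK call. Building it in one place keeps the optional settings consistent between exports.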

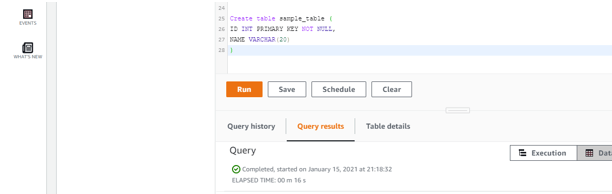

Of course, the “best” heavily depends on your context and use case, whether that's pulling data for a business intelligence dashboard or helping to inform better segmentation in your next marketing campaign. Regardless, we'll show you four different ways and let you pick what works best for you.

You can quickly export your data from Redshift to CSV with some relatively simple SQL. If you log into the Redshift console, you'll see the editor button in the menu on the left. Hover over it and proceed to the query editor, where you can connect to a database. Once connected, you can start running SQL queries.

What you'll learn in this article: how to export a CSV from Redshift using four helpful methods for data analytics.

Think you know your way around Amazon Redshift? Chances are, since it’s so loaded with features, you’re probably just discovering the tip of the iceberg. And even if you’re a superuser, there’s probably an easier way for you to do the day-to-day tasks you’ve come to master.

Case in point: exporting CSV files. Did you know Redshift allows you to export (part of) your Redshift data to a CSV file? And if so, are you sure you know the best way to get it done?
